Selecting Creatives to Compare

You can initiate a comparison from two places:- From the Creative Library — select the checkboxes on two or more creative cards, then click Compare Selected.

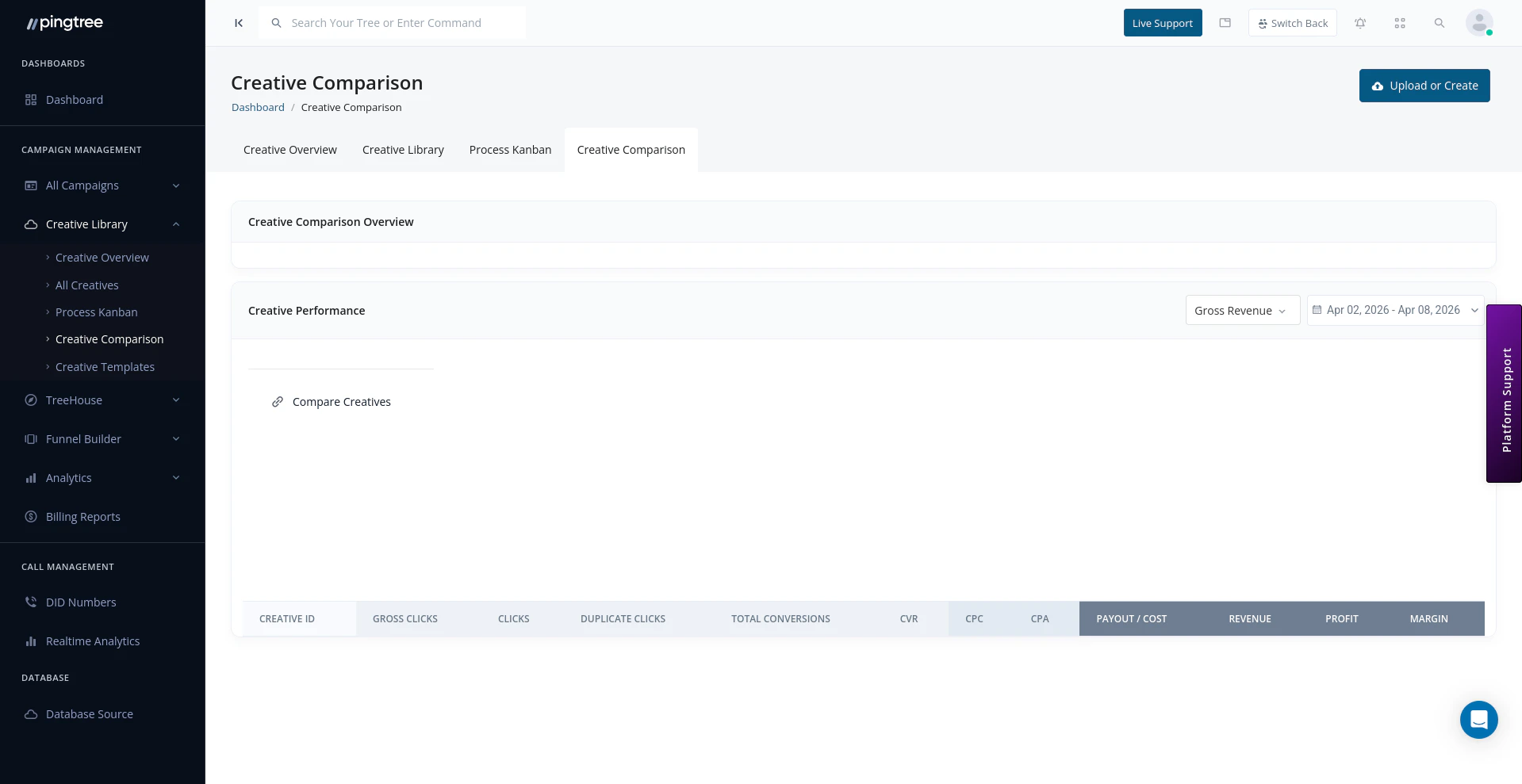

- From the Comparison page directly — use the creative picker to search and add creatives by name or ID.

Side-by-Side Performance Metrics

Once creatives are selected, their metrics are displayed in aligned columns so you can compare values directly.

| Metric | Description |
|---|---|
| Impressions | Total number of times the creative was served. |
| Clicks | Total clicks generated by the creative. |
| CTR (Click-Through Rate) | Clicks as a percentage of impressions. |
| Conversions | Number of leads or conversions attributed to the creative. |
| Conversion Rate (CVR) | Conversions as a percentage of clicks. |
| Revenue | Total revenue attributed to the creative. |
| Cost per Conversion | Average cost to generate one conversion through this creative. |
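
The ratio metrics in the table can be reproduced from raw totals, which is useful when checking exported numbers by hand. The following is a minimal sketch, not the platform's implementation: the `CreativeStats` class, its field names, and the `cost` input (total spend, which is not a column in the table) are assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class CreativeStats:
    """Raw totals for one creative (hypothetical field names)."""
    impressions: int
    clicks: int
    conversions: int
    revenue: float
    cost: float  # total spend; assumed input, not shown in the metrics table


def pct(numerator: float, denominator: float) -> Optional[float]:
    """Return numerator / denominator as a percentage, or None if undefined."""
    if denominator == 0:
        return None
    return 100.0 * numerator / denominator


def derived_metrics(stats: CreativeStats) -> dict:
    """Compute CTR, CVR, and cost per conversion from raw totals."""
    return {
        # CTR: clicks as a percentage of impressions
        "ctr": pct(stats.clicks, stats.impressions),
        # CVR: conversions as a percentage of clicks
        "cvr": pct(stats.conversions, stats.clicks),
        # Average spend per conversion; None when there are no conversions
        "cost_per_conversion": (
            stats.cost / stats.conversions if stats.conversions else None
        ),
    }


example = CreativeStats(
    impressions=10_000, clicks=250, conversions=20, revenue=1_200.0, cost=400.0
)
metrics = derived_metrics(example)
print(metrics)
```

With these example totals, CTR is 2.5%, CVR is 8%, and cost per conversion is 20.0. Returning `None` for a zero denominator avoids showing a misleading 0% for a creative that has not yet served.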

Comparing Across Campaigns and Sources

By default, comparison metrics are aggregated across all campaigns and sources the creative has been used in. You can narrow the scope using the filters at the top of the comparison view:

| Filter | Description |
|---|---|
| Campaign | Restrict metrics to a specific campaign. |
| Source | Restrict metrics to traffic from a specific source. |
| Date Range | Compare performance over a specific time window. |

Tip: If a creative performs well in one campaign but poorly in another, use the Campaign filter to isolate performance by context. The issue may be the audience or placement rather than the creative itself.
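
To make the difference between the default aggregation and a filtered view concrete, here is a small sketch. It assumes per-day delivery rows with hypothetical keys (`creative`, `campaign`, `source`, `day`); the sample data and the `aggregate` helper are illustrative only and do not describe how the platform stores or queries metrics.

```python
from collections import defaultdict
from datetime import date

# Hypothetical delivery rows: one per creative, campaign, source, and day.
ROWS = [
    {"creative": "banner_a", "campaign": "spring", "source": "search",
     "day": date(2024, 4, 1), "impressions": 1000, "clicks": 40},
    {"creative": "banner_a", "campaign": "summer", "source": "social",
     "day": date(2024, 7, 1), "impressions": 1000, "clicks": 10},
    {"creative": "banner_b", "campaign": "spring", "source": "search",
     "day": date(2024, 4, 2), "impressions": 500, "clicks": 15},
]


def aggregate(rows, campaign=None, source=None, start=None, end=None):
    """Sum impressions and clicks per creative; a filter left as None is not applied."""
    totals = defaultdict(lambda: {"impressions": 0, "clicks": 0})
    for row in rows:
        if campaign is not None and row["campaign"] != campaign:
            continue
        if source is not None and row["source"] != source:
            continue
        if start is not None and row["day"] < start:
            continue
        if end is not None and row["day"] > end:
            continue
        bucket = totals[row["creative"]]
        bucket["impressions"] += row["impressions"]
        bucket["clicks"] += row["clicks"]
    return dict(totals)


def ctr(bucket):
    """Clicks as a percentage of impressions (0.0 when nothing was served)."""
    if bucket["impressions"] == 0:
        return 0.0
    return 100.0 * bucket["clicks"] / bucket["impressions"]


all_totals = aggregate(ROWS)                        # default: every campaign and source
spring_totals = aggregate(ROWS, campaign="spring")  # Campaign filter applied

print(ctr(all_totals["banner_a"]))     # blended CTR across both campaigns
print(ctr(spring_totals["banner_a"]))  # CTR within the spring campaign only
```

In this sample data, `banner_a` has a blended CTR of 2.5% but a 4% CTR in the spring campaign alone, which is the kind of context effect the Campaign filter is meant to expose.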

Selecting Creatives for A/B Testing

Once you have identified creatives you want to test against each other, you can designate them as an A/B test directly from the comparison view:

- Select the creatives you want to test.

- Click Set as A/B Test.

- Assign the test to a campaign.

- Configure the traffic split (e.g., 50/50 or weighted).

- Save the test configuration.
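
For readers unfamiliar with how a weighted traffic split behaves, the sketch below shows one common approach: hashing a visitor ID into a bucket so the same visitor always sees the same creative. This is an assumption for illustration only. The document does not describe how the platform assigns traffic, and `assign_creative`, the visitor IDs, and the weight format are all hypothetical.

```python
import hashlib
from collections import Counter
from typing import Dict


def assign_creative(visitor_id: str, weights: Dict[str, int]) -> str:
    """Deterministically map a visitor to a creative in proportion to its weight.

    `weights` maps creative name to a non-negative integer weight,
    e.g. {"a": 50, "b": 50} for an even split or {"a": 70, "b": 30} for a weighted one.
    """
    total = sum(weights.values())
    if total <= 0:
        raise ValueError("weights must sum to a positive number")
    # Hash the visitor ID so the assignment is stable across requests.
    digest = hashlib.sha256(visitor_id.encode("utf-8")).digest()
    point = int.from_bytes(digest[:8], "big") % total
    # Walk the cumulative weights until the hashed point falls inside a bucket.
    cumulative = 0
    for creative, weight in weights.items():
        cumulative += weight
        if point < cumulative:
            return creative
    raise AssertionError("unreachable: point is always below the total weight")


# Simulate 10,000 hypothetical visitors under a 70/30 split.
split = {"variant_a": 70, "variant_b": 30}
counts = Counter(assign_creative(f"visitor-{i}", split) for i in range(10_000))
print(counts)
```

Over many visitors the observed shares approach the configured weights, while any single visitor keeps a consistent assignment, which keeps the test results from being diluted by visitors who see both variants.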

Saving Comparison Selections

Comparison sets can be saved so you do not have to rebuild them from scratch each time you want to revisit a group of creatives.

- Click Save Comparison and give the set a name (e.g., Q2 Banner Variants).

- Saved comparisons appear in the Saved Comparisons sidebar on the left of the page.

- Open a saved comparison at any time to reload the same creatives with fresh metrics for the current date range.

Tip: Save a comparison set after a creative review meeting so you can quickly pull it up in the next session and see how metrics have evolved since then.

Identifying Top Performers

The comparison view is particularly useful for evaluating similar creative variations, such as the same ad copy with different imagery or the same design in multiple sizes. Common use cases:

| Use Case | How to Use the Comparison Tool |
|---|---|
| Choosing the best banner from a set of variants | Compare all variants side-by-side and rank by CTR or CVR. |
| Evaluating a creative refresh | Compare the old creative against the new one over the same date range. |
| Reviewing creatives before pausing a campaign | Compare active creatives to determine which ones to keep live. |
| Post-A/B test analysis | Load both test creatives into the comparison view and review final results. |

Tip: Sort the comparison table by Conversion Rate rather than clicks alone — a creative with fewer clicks but a higher CVR is often more valuable than a high-click creative that fails to convert.
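
The effect of sorting by conversion rate instead of clicks can be seen in a short sketch. The creative names and numbers below are made up for illustration, and the tie-break on clicks is an assumption rather than documented behavior of the comparison table.

```python
# Hypothetical totals for three creatives in one comparison set.
creatives = [
    {"name": "banner_a", "clicks": 500, "conversions": 10},
    {"name": "banner_b", "clicks": 200, "conversions": 12},
    {"name": "banner_c", "clicks": 0, "conversions": 0},
]


def cvr(creative):
    """Conversions divided by clicks; 0.0 when the creative has no clicks."""
    if creative["clicks"] == 0:
        return 0.0
    return creative["conversions"] / creative["clicks"]


# Rank by CVR first, using clicks only to break ties.
by_cvr = sorted(creatives, key=lambda c: (cvr(c), c["clicks"]), reverse=True)
by_clicks = sorted(creatives, key=lambda c: c["clicks"], reverse=True)

print([c["name"] for c in by_cvr])
print([c["name"] for c in by_clicks])
```

Here `banner_b` ranks first by CVR (6% against 2% for `banner_a`) even though `banner_a` has more than twice the clicks, so the two sort orders disagree on the top performer.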